SuLMaSS project status

Outcomes

WP1 Persistent access to research software

Expected Outcome: Automated process to obtain a reusable and citable representation of the software in the form of an archival package to be stored in a sustainable research data infrastructure, i.e., a repository.

The process was implemented and is publicly available as open source for reuse by others. It is documented in a manuscript that is currently under review and available as a preprint.

Expected Outcome: Concept for sustainable operation of the infrastructure built during SuLMaSS (including WP2 & WP3).

The requirements for sustained operation are described in the sustainability concept.

WP2 Continuous testing and deployment infrastructure

Expected Outcome: General testing environment usable for a broad range of scientific software packages. Small atomic test steps help to maintain and extend sustainable test sets.

Autotester can use arbitrary external validation tools to integrate with any data format and software. Test steps can be defined in an XML-based configuration format. The user is free to define atomic or coarse-grained test cases. Autotester is publicly available under the GPLv3 license together with comprehensive documentation.
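Autotester's interface to external validators is not described in this report. The sketch below assumes only that an external validation tool signals pass or fail through its exit code. It shows what a hypothetical atomic test step could delegate to: a small Python validator that compares a numeric result trace against a stored reference within a tolerance. The function names, file format and tolerances are illustrative assumptions.

```python
"""Hypothetical external validator for an atomic test step.

Assumption: the test harness only inspects the exit code
(0 = pass, 1 = fail, 2 = usage error)."""
import math
import sys


def load_values(path: str) -> list[float]:
    """Read whitespace-separated floats from a text file."""
    with open(path) as fh:
        return [float(tok) for tok in fh.read().split()]


def compare(result: list[float], reference: list[float],
            rel_tol: float = 1e-6, abs_tol: float = 1e-9) -> bool:
    """True if both series have the same length and all values agree."""
    if len(result) != len(reference):
        return False
    return all(math.isclose(r, e, rel_tol=rel_tol, abs_tol=abs_tol)
               for r, e in zip(result, reference))


def main(argv: list[str]) -> int:
    """Compare RESULT against REFERENCE and return a process exit code."""
    if len(argv) != 3:
        print(f"usage: {argv[0]} RESULT REFERENCE", file=sys.stderr)
        return 2
    ok = compare(load_values(argv[1]), load_values(argv[2]))
    print("PASS" if ok else "FAIL")
    return 0 if ok else 1
```

Used as a standalone tool, the script would end with `sys.exit(main(sys.argv))` and be called with the simulation output and the reference file as arguments. Keeping each validator this small is what makes atomic test steps easy to maintain and recombine.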

WP3 Community interaction infrastructure

Expected Outcome: Extended service connecting different aspects of software development and research, tailored to the needs of research software communities.

A community interaction infrastructure based on GitLab was set up and is used for openCARP development. The central entry point is the openCARP web page, which links to the GitLab instance and the Question&Answer system and integrates the documentation, the video tutorials from YouTube, etc.

WP4 Needs analysis and software design, SW management

Expected outcome: Detailed overview of existing software components

Documented in the openCARP paper.

Expected outcome: Analysis of needs and wishes of potential users based on questionnaire and in-person discussion

A comprehensive survey was performed and in-depth discussions with selected users were held and summarized in the openCARP paper.

Expected outcome: Broad-based, community-driven consensus requirement specification

The requirements are documented in the openCARP paper (section 1.3 and 2).

Expected outcome: Software architecture description

The software architecture description was integrated into the code documentation and is displayed on the front page of the openCARP Doxygen documentation.

Expected outcome: Implementation and deployment plan

Planning was done using the project management functionality of GitLab (e.g., milestone planning). The general approach is described in the openCARP paper.

Expected outcome: Software management plan

The continuously updated software management plan (SMP) is version controlled and automatically synchronized with the web page.

WP5 Design and setup of test cases for openCARP

Expected outcome: Automated unit tests

As openCARP is based on the established carpentry/CARP software, we considered the effort of implementing unit tests for the entire codebase disproportionate to the added value. This decision is supported by the fact that even the standard for software life cycle processes of medical devices (including software as a medical device), IEC 62304, does not strictly require unit tests.

Expected outcome: Automated high-level tests for all relevant use cases identified in WP4

55 tests were implemented using Autotester and integrated into the continuous integration pipelines. Test reports can be accessed from within GitLab and are also integrated into the web page.

Expected outcome: Statistics on test coverage and success rates

Test success rates are included in the test reports (see previous outcome). Our current testing environment does not provide test coverage rates.

WP6 Implementation of openCARP

Expected outcome: Next generation of the cardiac electrophysiology simulator openCARP designed for sustainability and API for reusability (code fully reviewed)

The first public version of the verified openCARP simulator was released on March 1, 2020. The carputils Python interface has been published as well, together with basic documentation (to be extended).

Expected outcome: User-friendly interface to facilitate first steps, increase productivity and reproducibility

We developed a web-based frontend to run the example experiments. The web-based GUI can be installed locally as a Docker container. In the future, we plan to also provide an instance running on our webserver to further lower the entry threshold.

To increase reproducibility, we have established a streamlined workflow in carputils that enables easy bundling and sharing of experiments (and optionally their results). The workflow is documented in the repository and presented on the web page.

Expected outcome: Comprehensive code documentation

Besides the user manual and the simulator parameter definitions, we expose the code documentation for openCARP and carputils on the web page. The carputils documentation should be extended further in the future.

WP7 User documentation

Expected outcome: 20 video tutorials with an average length of 10 minutes

21 videos are published on our YouTube channel and embedded on the web page. In total, they cover more than 5 hours of material. The comprehensive introductory videos are longer, while more specific topics are covered in shorter videos.

Expected outcome: Topical introductions

30 documented examples covering the full spectrum of openCARP's functionality are online on the web page.

Expected outcome: Comprehensive reference documentation of the software and its API

All parameters are fully documented (command line, PDF, and online). The core code of openCARP is documented; the carputils documentation is still rudimentary.

WP8 User support and interaction, dissemination

Expected outcome: Project website with entry page, marketing video, and interactive support platform

The project website is online as the central entry point to all openCARP related information and infrastructure including the interactive support platform. We did not produce a marketing video yet as other efforts were prioritized.

Expected outcome: Two user meetings

One onsite user meeting took place in September 2021 in Karlsruhe. We hosted more than 30 participants, the maximum feasible on university premises at that time under COVID-19 regulations. Due to travel and meeting restrictions in 2020, the other user meeting, initially planned as an in-person event, was replaced by two online meetings (November 2020 and April 2021) with more than 90 participants. An online contributor meeting took place in March 2022.

Expected outcome: Presentation of the software at minimum 5 conferences

We presented openCARP in dedicated talks, as slides within other talks, or in informal conversations at several conferences, including CompBioMed (10-12 June 2019, Sendai City, Japan), ICIAM (15-19 July 2019, Valencia), TRM (8-10 December 2019, Lugano, Switzerland), GRC Cardiac Arrhythmia Mechanisms (31 March - 5 April, Lucca, Italy), EMBC (23-27 July 2019, Berlin), BMT (25-26 September 2019, Frankfurt), Uncertainty quantification (5-7 June 2019, Cambridge, UK), FIMH (6-8 June 2019, Bordeaux, France), RISM iHEART (22-24 July 2019, Varese, Italy), Cardiac MEC (4-7 September 2019, Freiburg), CinC (8-11 September 2019, Singapore), Cardiac Physiome (4-7 December 2019, Maastricht, The Netherlands), VPH (26-29 August 2020, Paris, France), and CinC (13-16 September 2020, Rimini, Italy). These were followed by numerous online conferences in 2020 and 2021.

The generic research software infrastructure developed during SuLMaSS was presented at conferences including eScience Tage (27-29 March 2019, Heidelberg, Germany), deRSE (4-6 June 2019, Potsdam, Germany), and RDA Plenary 2019.

Evaluation

WP1 Persistent access to research software

The tool chain successfully automates the creation of a Submission Information Package (SIP) and its ingestion into a repository.

The implementation is now in productive use: SIPs are published in the repository RADAR4KIT together with metadata, and each published version receives a DOI (see doi:10.35097/542 for an example). The pipeline is publicly available for reuse by other software projects.
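The report does not detail the internal layout of the archival packages. As an illustration only, the following Python sketch assembles a minimal SIP under assumed conventions loosely modelled on BagIt (release files under `data/`, a SHA-256 manifest, and a `metadata.json`). It is not the SuLMaSS pipeline, does not cover ingestion into RADAR4KIT, and all function and file names are hypothetical.

```python
"""Hypothetical SIP builder: tarball with payload, checksum manifest, metadata."""
import hashlib
import io
import json
import tarfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Stream a file through SHA-256 and return the hex digest."""
    h = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def _add_bytes(tar: tarfile.TarFile, name: str, data: bytes) -> None:
    """Add an in-memory file to the archive."""
    info = tarfile.TarInfo(name)
    info.size = len(data)
    tar.addfile(info, io.BytesIO(data))


def build_sip(src_dir: Path, metadata: dict, out_file: Path) -> Path:
    """Pack all files of src_dir under data/ plus manifest and metadata."""
    files = sorted(p for p in src_dir.rglob("*") if p.is_file())
    entries = [(p, "data/" + p.relative_to(src_dir).as_posix()) for p in files]
    # One "<checksum>  <path>" line per payload file.
    manifest = "".join(f"{sha256_of(p)}  {arc}\n" for p, arc in entries)
    with tarfile.open(out_file, "w:gz") as tar:
        for p, arc in entries:
            tar.add(p, arcname=arc)
        _add_bytes(tar, "manifest-sha256.txt", manifest.encode())
        _add_bytes(tar, "metadata.json",
                   json.dumps(metadata, indent=2, sort_keys=True).encode())
    return out_file
```

The checksum manifest allows the package contents to be verified after transfer. The descriptive metadata would still have to be mapped onto the repository's own schema before a DOI is assigned.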

WP2 Continuous testing and deployment infrastructure

The outcome will be evaluated by the number of working test suites for different modules of the tested scientific software packages (Pace3D and openCARP).

Test cases exist for Pace3D, and the openCARP tests are integrated with Autotester.

WP3 Community interaction infrastructure

Successful deployment, dissemination, and availability of the infrastructure extensions. Usage and user acceptance will be tracked through a survey and by counting access.

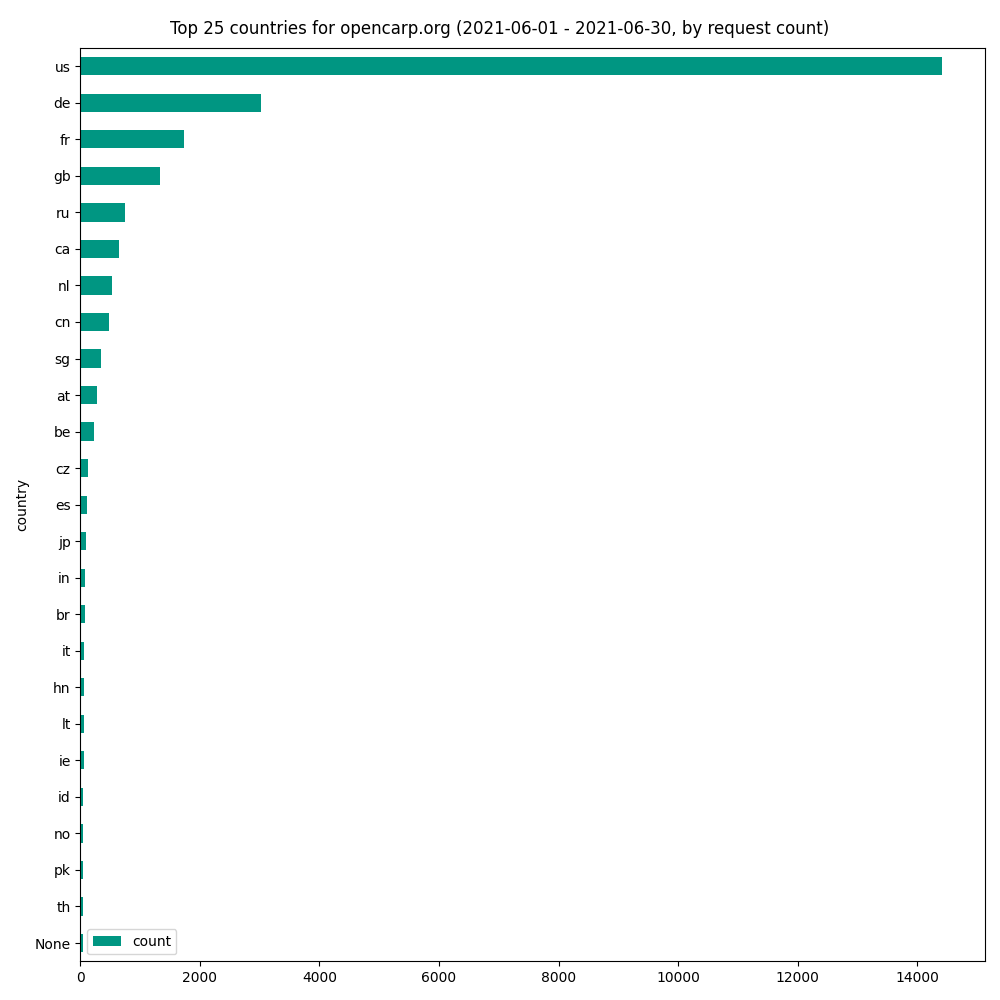

We have around 8,000 accesses to the web page per month. The number of individual requests is even higher. The figure above shows the numbers for June 2021 together with the distribution by country.

Moreover, we collected feedback during the user meetings in various forms. This feedback informed the prioritization of developments, the design of the user meetings, and the plans described in the CARPe-diem proposal.

WP4 Needs analysis and software design, SW management

The needs analysis will be based on at least 10 in-person discussions and 40 questionnaires; software engineering experts will evaluate the architecture, implementation, deployment, and software management plans and provide feedback.

Done. See outcome description.

WP5 Design and setup of test cases for openCARP

We will assess test coverage levels and success rates and review arising bugs regarding their coverage by test cases.

The test suite is run automatically on each commit through continuous integration pipelines. Fixes are done within days.

WP6 Implementation of openCARP

The functionality of the implemented software package will be compared against the requirements defined in WP4; test users will be surveyed initially and then every 12 months.

Feedback from the regular user meetings was integrated (see also WP3).

WP7 User documentation

We aim for at least 80% positive votes for the video tutorials; we will review comments regarding the videos after 2 years to plan updated versions. We aim for at least 80% positive votes for the documentation; all comments to the documentation will be addressed within 1 week; we will perform a survey about satisfaction regarding user documentation after 2 years.

We received few but exclusively positive votes on the YouTube videos. The survey was conducted during the user meetings (see also WP3). Feedback on the documentation mainly reached us through questions in the Q2A system.

WP8 User support and interaction, dissemination

We aim at a minimum of 20 unique visitors of the project web page per month, 50 downloads, and 30 active users in the Question2Answer system after 2 years. We aim to respond to at least 90% of all questions within 2 days, 50% of all questions should have an accepted answer within a month, 80% within half a year. The user meetings shall each be attended by at least 20 participants from at least 8 institutions.
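The response-time targets above reduce to simple ratios over question timestamps. As a hedged sketch, assuming a hypothetical export of each question's posting time, first-answer time and acceptance time (the Q2A system's actual export format is not covered here), and approximating a month as 30 days and half a year as 182 days:

```python
"""Sketch: evaluate the WP8 Q2A response-time targets from timestamps.

The Question record is hypothetical; month = 30 days and
half a year = 182 days are simplifying assumptions."""
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Question:
    asked: datetime
    first_answer: Optional[datetime] = None
    accepted: Optional[datetime] = None


def share_within(questions: list[Question], attr: str, limit: timedelta) -> float:
    """Fraction of all questions whose `attr` event happened within `limit`."""
    if not questions:
        return 0.0
    hits = 0
    for q in questions:
        t = getattr(q, attr)
        if t is not None and t - q.asked <= limit:
            hits += 1
    return hits / len(questions)


def check_targets(questions: list[Question]) -> dict[str, bool]:
    """Compare measured shares against the thresholds stated in WP8."""
    return {
        "answered_within_2_days>=90%":
            share_within(questions, "first_answer", timedelta(days=2)) >= 0.90,
        "accepted_within_month>=50%":
            share_within(questions, "accepted", timedelta(days=30)) >= 0.50,
        "accepted_within_half_year>=80%":
            share_within(questions, "accepted", timedelta(days=182)) >= 0.80,
    }
```

Unanswered or never-accepted questions count against the target because the denominator is the total number of questions. The hard part in practice is obtaining reliable timestamps, not the arithmetic.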

The numbers are higher than expected (see WP3 and the figure above). Some of the initially planned measures could unfortunately not be evaluated with reasonable effort (e.g., unique visitors without relying on cookies, or response times). 66 users posted on the Q2A system; 183 questions received a total of 212 answers and 288 additional comments. Currently, there are no unanswered questions. All user meetings (both online and onsite) were attended by more than 30 participants from more than 13 institutions each.